I’ve seen a lot of reactions to the tragedy of the celebrity photo plundering that affected Jennifer Lawrence, Kate Upton, and many others. Some condemn the celebrities who had taken nude photos and videos of themselves. Some condemn the culture of men who objectify women. Some condemn cloud computing. Some condemn the people who view the photos. Some condemn people with poor computer security.

I see all of these perspectives, but I think we’re also missing something bigger. To get there, I’m going to start with a story that takes place in 1993.

I was a graduate student at the University of Arizona studying computer science. It was a great place to be. Udi Manber was a professor working on agrep and glimpse, long before he became the head of search at Google. Larry Peterson had developed x-kernel, an object-oriented framework for network protocols. Bob Metcalfe, inventor of Ethernet, dropped by one day to review what we were doing with high speed networking.

I’ll never forget the uproar that occurred when we received a new delivery of Sun workstations. I think it was the Sun SPARCstation 5, although I could be wrong. But what was different about these computers was that they contained an integrated microphone. And that meant anyone who could get remote access to the software environment could listen in to that microphone from anywhere in the world.

Keep in mind that the owners of these computers were not technical novices. These were the people creating core components of what we use today: everything from search to TCP optimized for video. And they were damn nervous about people hacking into the computers and listening in to the microphone from anywhere.

Fast-forward about seven years, and I’m reading a series of books about cultural anthropology. (Yes, this is relevant. And if you’re interested, Cows, Pigs, Wars and Witches by Marvin Harris is a great starting point.) I might be a little loose on the specific details, but the gist of what I read is that when scientists studied indigenous tribes relatively untouched by modern culture they found that “crime” occurs at a similar rate across most tribes. That is, norms might differ from culture to culture, but things like murder and stealing happen in all tribes, and at similar frequencies. Tribal culture doesn’t have prison, so the punishment is being cast out of the tribe. Without getting into details, this is actually quite a strong punishment. Not only is social rejection itself powerful, but the odds of survival go down dramatically without the support structure of a tribe.

Now let’s ground ourselves back in the current day. What happened to Jennifer Lawrence, Kate Upton, and many others is terrible. However, this isn’t an isolated occurrence. Stealing photos – and worse, much worse – occurs all the time, to many women, and we’re just not hearing about it because they aren’t famous.

This 2013 Ars Technica article, Meet the men who spy on women through their webcams (caution: may contain triggers), is probably the best overview of ratters. The term comes from Remote Administration Tool (RAT). These are men (almost always men) who prey on women (almost always women) by first gaining access to their computers, then spying on them through their webcams, in the privacy of their own homes, as well as going through their computers to find photos and videos. Eventually the ratter has compromising videos and photos, whether found on the filesystem or recorded using the webcam. The ratter then uses the threat of sharing those compromising photos and videos to blackmail the victim into recording yet more explicit videos.

Certainly ratters are awful people who deserve to go to jail for their crimes. And equally certainly there are great improvements we can make in our society in terms of how men treat and view women. And we can make improvements in our personal computer security.

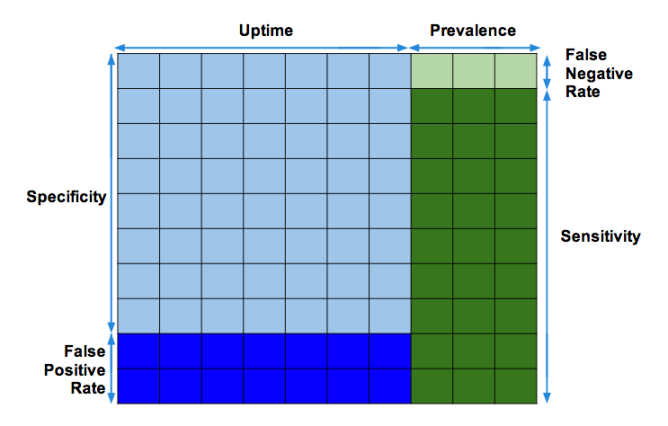

However, if the tribal studies tell us that crime still occurs at a relatively constant rate, and if even some of the most technically sophisticated people fear their microphones being used to spy on them, then we know that neither criminal deterrents nor improvements in our personal computer security practices are going to be sufficient to completely stop such behavior.

So then what?

Well, now we come back to what Cory Doctorow frequently argues. Computer laws such as those around DRM inhibit computer researchers from making improvements to computer security, by making it illegal to reverse engineer how certain bits of code work. Spyware that originates from governments, corporations, and school districts is frequently subverted by computer hackers and ratters (in addition to being abused by the originators).

Cory has also said that computer security and privacy are like potable water: With enough effort, individuals can capture, treat, and store their own independent water supply. But as a society, it’s far more efficient for the government to provide guaranteed drinkable water through municipal water supplies. Similarly, an individual might take heroic measures to ensure their security and privacy: use long passwords, avoid cloud services, cover their webcams, stay off the internet whenever possible. But how feasible is it for every person to do this? And can we all maintain that level of heroic effort? Probably not.

What we need is change at the highest level.

We need our governments to stop perpetuating the problem by spying on us, and instead take our privacy and security seriously. Instead of DRM, give us privacy. Instead of school districts spying on us, give us privacy. Instead of buying spyware from corporations to spy on us, make selling software that spies on us illegal.

Privacy and security is a problem that affects all of us, not just the celebrities who are the latest and most visible in a long series of victims.