As I mentioned in my last blog post, I recently had the privilege of seeing and talking with Ward Cunningham, inventor of the wiki. In 1995 he built the first wiki as a tool for collaboration with other software developers and created the Portland Pattern Repository.

Virtually everyone is familiar with wikis at this point. A wiki is the web you can edit. The wiki reached its widest audience with the creation of Wikipedia. For a wiki to work well, it is essential that a motivated critical mass of participants maintains it. For Ward’s original Portland Pattern Repository wiki, the motivation for users was to advance the way software was developed. For Wikipedia contributors, the motivation is to build a comprehensive encyclopedia of knowledge. Both of these goals are important to their respective populations of users. Wikipedia contributors, for example, may spend dozens of hours per week in unpaid work to make Wikipedia a better encyclopedia.

Why is a motivated critical mass of contributors so important? A wiki needs several kinds of contribution to thrive:

- the contribution of original material

- improving or correcting material

- building linkages between topics in the wiki

- various forms of wiki gardening that include:

    - ensuring topics conform to good style

    - correcting mistakes

    - building and improving trailheads and maintaining trails

    - filling in critical gaps

    - removing spam and user errors (such as when a user accidentally deletes content from a post)

    - and monitoring changes to spot when any of the above gardening is needed

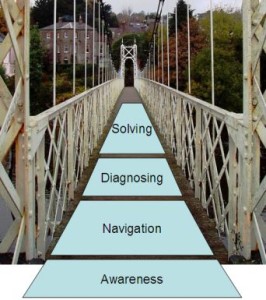

What if you want a wiki but lack a sufficiently large, sufficiently motivated group of contributors? This is a problem I’ve been thinking about for some time. Derek Powazek says that what we need to do is “smallify the task”. In part that can be done by breaking down big tasks into smaller tasks. It can also be achieved by finding ways to eliminate some of the bigger tasks.

In that case, could the gap between the critical mass and motivation a wiki needs and the user population you actually have be at least partially closed by some kind of automation or collective intelligence? In other words, could some of the work normally done through the explicit contributions of a small group of committed users instead be done through implicit feedback and machine intelligence? Below I’ve captured my thinking in this space.

Let’s start by assuming that, at a minimum, raw contributions would still have to come from people, as would corrections to the material. But what if we could simplify building linkages, improving trailheads and maintaining trails, and spotting and removing mistakes or spam?

Automating Linkages

Wiki topics are frequently characterized by many hyperlinks between the topics. In fact, the rich hyperlinking between topics is very much a part of what makes a wiki so effective. However, chasing down all the right links between topics can add to the effort of writing a topic in the first place, or of maintaining the wiki over time.

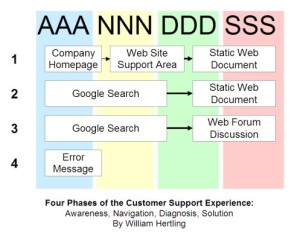

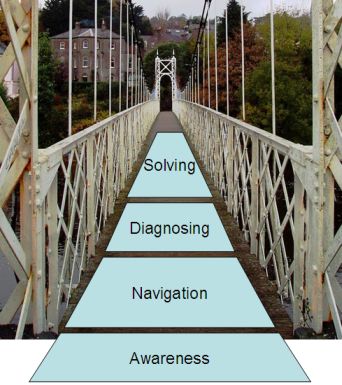

There is a technique that can be used to create a list of suggested topics that are related in some way to the topic a user is currently viewing. This technique relies on observing the behavior of previous users to determine what topics those users viewed, and in what order. For example, even if there isn’t a rich set of hyperlinks, a small subset of users will be motivated enough by their need for information to seek out other topics. They might do this by using search, the recent changes page on a wiki, or by navigating a convoluted set of hyperlinks to get to an ultimately useful destination.

The analysis then consists of using the clickstream data of these visitors to determine, for any given page A, what other pages also seem useful, considering, for example, the amount of time spent on each page and the order in which the pages were visited. Suppose I view the clickstream data and see a number of users who visit topic A, then visit some intermediate topics very briefly, and then spend a significant amount of time reading topics D and G. I may then conclude that other visitors who read topic A may also be interested in topics D and G.

We would need the user interface of the wiki to support presenting a list of recommended topics. Then when any visitor views the topic A page, the wiki can present recommended links for topics D and G. This makes it more likely that subsequent users are able to find related topics without going through search, recent changes, or long navigation paths. The net effect is pretty similar to the effect achieved in good wiki gardening when topics are appropriately hyperlinked together. But since this technique can be automated, it becomes possible to increase the usefulness of the wiki while decreasing the effort needed to maintain it.
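The clickstream analysis described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the session format, the `related_topics` function, and the 60-second dwell threshold are all hypothetical, and a real wiki would need to tune them against its own traffic.

```python
from collections import Counter, defaultdict

# Hypothetical threshold: a page view counts as "read" only if the visitor
# stayed at least this many seconds.
MIN_DWELL_SECONDS = 60


def related_topics(sessions, min_dwell=MIN_DWELL_SECONDS, top_n=2):
    """Map each topic to the topics most often read later in the same visit.

    `sessions` is a list of visits; each visit is an ordered list of
    (topic, seconds_on_page) tuples.
    """
    counts = defaultdict(Counter)
    for visit in sessions:
        for i, (start, _) in enumerate(visit):
            # Distinct topics viewed after `start` that held the reader's attention.
            later = {t for t, secs in visit[i + 1:] if secs >= min_dwell and t != start}
            for topic in later:
                counts[start][topic] += 1
    # Rank by how many visits agree, breaking ties alphabetically.
    return {
        start: [t for t, _ in sorted(c.items(), key=lambda kv: (-kv[1], kv[0]))[:top_n]]
        for start, c in counts.items()
    }


sessions = [
    [("A", 90), ("B", 5), ("C", 8), ("D", 240), ("G", 300)],
    [("A", 45), ("C", 4), ("D", 180), ("G", 200)],
    [("A", 120), ("B", 10), ("G", 150)],
]
recommendations = related_topics(sessions)
print(recommendations["A"])  # ['G', 'D']
```

Topics B and C never appear as recommendations for A because nobody lingered on them, which is the point of weighting by time spent rather than by clicks alone.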

Automating Trailheads

A similar technique can be used to create and improve trailheads. The term trailhead as used for wikis comes from the trailheads associated with hiking trails. Typically there may be a large, interlinked network of hiking trails. A hiking trailhead is a place to enter that network, and it frequently has a map indicating what trails exist and how they connect. A wiki trailhead performs a similar role. For someone coming to the wiki from the greater Internet, the wiki trailhead helps them orient themselves to the organization of information on the wiki and decide where and how to start reading through the wiki based on their interests.

Increasingly, people get to destination websites from search engines such as Google. While Google is frankly amazing at matching search terms to useful webpages, it can sometimes drop you into the middle of the website experience. That is, while the destination page may be the one with the content that most closely matches the search term, there may be very useful and relevant information on other pages that are related to the current page. And it may not be obvious how to navigate to those other pages. This is very similar to the related topic analysis I described above.

However, in this context we have additional information that can be used to better predict what other pages or topics the user will be interested in. When Google, or another search engine, sends a visitor to our site, the HTTP referrer (the `Referer` header) will tell us the URL that the user came from. For search engines, this referrer URL will typically include the search term the user was searching for. This means that when we do clickstream analysis and analyze how users visit the pages on our site, we can determine not just that readers of topic A are likely to be interested in topics D and G, but that a reader of topic A who comes to our site from a search engine having searched for a given term S is most likely to be interested in topic G, but not D. This adds a level of refinement to our basic predictive algorithm and creates a better experience for users who come to our website from search engines.
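Here is a rough sketch of how the search term could refine the recommendation. It assumes the referrer URL carries the term in a query parameter named `q`; that name, the visit format, and the `search_term` and `recommend_by_term` functions are illustrative assumptions, not a description of any particular search engine or wiki.

```python
from collections import Counter, defaultdict
from urllib.parse import parse_qs, urlparse


def search_term(referrer, param="q"):
    """Return the normalized search term carried in a referrer URL, or None."""
    values = parse_qs(urlparse(referrer).query).get(param)
    return values[0].strip().lower() if values else None


def recommend_by_term(visits, min_dwell=60):
    """Map (landing_topic, search_term) to the later topic read most often.

    Each visit is (referrer_url, [(topic, seconds_on_page), ...]).
    """
    counts = defaultdict(Counter)
    for referrer, pages in visits:
        term = search_term(referrer)
        if term is None or not pages:
            continue  # not a search referral we can interpret
        landing = pages[0][0]
        for topic, secs in pages[1:]:
            if secs >= min_dwell and topic != landing:
                counts[(landing, term)][topic] += 1
    return {key: c.most_common(1)[0][0] for key, c in counts.items()}


visits = [
    ("https://www.google.com/search?q=wiki+spam", [("A", 30), ("G", 200)]),
    ("https://www.google.com/search?q=wiki+spam", [("A", 20), ("D", 10), ("G", 150)]),
    ("https://www.google.com/search?q=wiki+links", [("A", 40), ("D", 180)]),
    ("https://example.org/some/page", [("A", 50), ("D", 90)]),  # no search term
]
by_term = recommend_by_term(visits)
print(by_term[("A", "wiki spam")])   # G
print(by_term[("A", "wiki links")])  # D
```

The same landing page A now leads to different suggestions depending on what the visitor searched for, which is the refinement described above.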

Automating Weeding

We can also borrow a technique from sites such as Engadget, Gizmodo, and Slashdot to make spotting and removing bad content or spam much easier. The comments on Engadget and Gizmodo can be rated by viewers with +, -, or !. The plus means the comment is good, the minus means the comment is bad, and the exclamation point means the reader wants to “report the comment”, such as for bad language or spam. Many other sites use similar techniques for comments and discussion threads. Highly rated comments float to the top, appear in bold, or otherwise stand out. Low-rated comments float to the bottom, get grayed out, or are otherwise diminished in importance. Reported comments may vanish from the site entirely. All of this happens with no manual intervention. Instead, it relies on minimal input from many users.

A similar technique could be used on a wiki. If we allow users to rate a topic, or even sections within a topic, with a plus or a minus, or to report it, then we can again apply some automated analysis to determine what to do. Topics that are “reported” by a certain percentage of viewers should go away relatively quickly (with repeated occurrences by the same contributor ultimately resulting in banning the contributor altogether). Topics that are rated down by a certain percentage of viewers should diminish in importance, which could be indicated by changing the way the topic is displayed (perhaps with grayed-out text), eliminating incoming links to the topic, or removing the topic entirely. Or, if there is another similar topic that is highly rated, the highly rated topic could replace the low-rated one.
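As a minimal sketch of that automated analysis, the rule could be a pair of percentage thresholds. Every number here is an assumption chosen for illustration (a 50-view minimum, 10% reports, 30% minus votes), as are the `Ratings` class and `moderate` function; a real wiki would have to pick values that resist abuse by a handful of voters.

```python
from dataclasses import dataclass


@dataclass
class Ratings:
    views: int
    plus: int = 0
    minus: int = 0
    reports: int = 0


def moderate(r, min_views=50, report_ratio=0.10, minus_ratio=0.30):
    """Return 'remove', 'demote', or 'keep' for a topic or section."""
    if r.views < min_views:
        return "keep"  # too little feedback to act on
    if r.reports / r.views >= report_ratio:
        return "remove"  # reported by enough viewers to take it down
    if r.minus / r.views >= minus_ratio:
        return "demote"  # e.g. gray out the text or drop incoming links
    return "keep"


print(moderate(Ratings(views=200, reports=30)))                     # remove
print(moderate(Ratings(views=200, plus=20, minus=80)))              # demote
print(moderate(Ratings(views=10, reports=9)))                       # keep
print(moderate(Ratings(views=200, plus=90, minus=5, reports=1)))    # keep
```

The minimum-views check keeps a brand-new topic from being removed by its first few readers.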

Automating Good Style

Another area that gardeners of a wiki spend considerable time on is ensuring that pages conform to good style. Good style may vary by wiki and by group of collaborators, but essentially it is the set of conventions that ensure a certain degree of uniformity, usefulness, and accessibility of the information contained in wiki topics. It varies by group because different groups have different goals: Wikipedia’s contributors are historians, and they seek to document things from a neutral point of view. The Portland Pattern Repository’s contributors were software developers who were activists for a software development methodology, and they sought discourse and understanding.

A form of template, or gentle guidance, could help ensure that pages conform to good style without manual intervention. For example, a wiki that contains troubleshooting information might guide the user contributing a new topic towards organizing their information as a problem statement followed by a series of solution steps. Subsequent contributors to the topic might be guided to add comments on a section, add solutions to a problem, or qualify a solution step with the particular set of problem conditions.
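One very simple form this guidance could take is a check that nudges the contributor about sections the template expects. The sketch below is hypothetical throughout: the section names, the plain-line heading convention, and the `missing_sections` function are assumptions made for illustration, and it only suggests rather than blocks.

```python
# Hypothetical template for a troubleshooting topic.
TROUBLESHOOTING_TEMPLATE = ("Problem", "Solution steps")


def missing_sections(text, required=TROUBLESHOOTING_TEMPLATE):
    """Return the required section headings that do not appear as a line in text."""
    present = {line.strip().lower() for line in text.splitlines()}
    return [name for name in required if name.lower() not in present]


draft = "Problem\nThe printer shows as offline.\n"
hints = missing_sections(draft)
print(hints)  # ['Solution steps']
```

The wiki could show these hints next to the edit box and still accept the page as written, which keeps the guidance gentle.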

The trick would be to balance this guidance with the necessary freedom to ensure that users are not too constricted in their options. Systems that are too constricted would likely suffer from several problems. One problem is that the site would not appear alive in the way that wikis frequently appear alive. (By comparison, SharePoint sites are highly constricted in what information can be placed where, and they never display the sense of liveness that a wiki does.) Another problem is that contributors may feel stifled by the restrictions placed on them and choose either not to contribute at all, or not to contribute with their full creativity and passion. I can’t quite envision exactly how this guidance would work, but if it could be figured out, it would go a long way toward further reducing the maintenance workload of the wiki.

In summary, what I’m trying to envision is a next-generation wiki that combines the editable webpage aspect of any other wiki with collective intelligence heuristics that build upon the implicit feedback of many users to replace much of the heavy lifting required in the maintenance of most wikis. This will be useful anytime the intended users of a given wiki are not likely to include a critical mass of motivated contributors. It will not make up for having no contributors at all, and it will not work for a wiki with very few users (such as a wiki used by a single workgroup inside a closed environment). But it may help those groups that are on the borderline of having a critical mass of contributors and that have a sufficient mass of readers.

I’m very interested in hearing reactions to this concept, and in learning of any current efforts in this direction with wikis.

*Note: This post was updated 4/9/2009 in response to feedback. It is largely the same content with some additional clarifications. — Will Hertling