
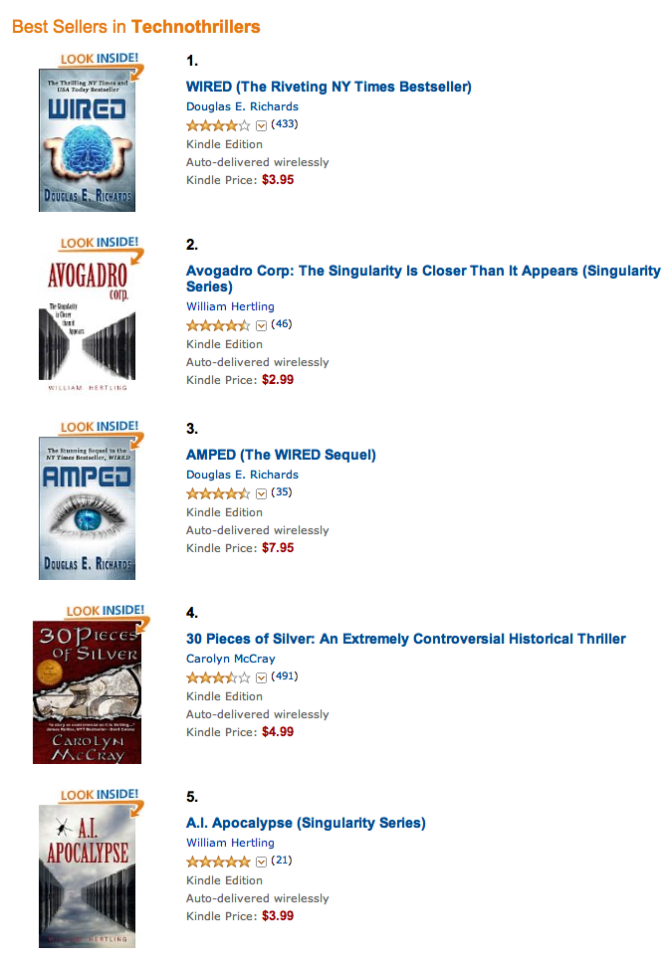

Both books catapulted to the top of the technothrillers category this week. Thank you to everyone who bought a copy, posted reviews, or told friends!

July News: Bestsellers, Smashwords, Robot Videos

If you’re going to be at SXSW Interactive next week, I hope you’ll join us at Wall-E or Terminator: Predicting the Future of AI.

Daniel H. Wilson (author of Robopocalypse and the upcoming AMPED), Chris Robson (chief scientist at Parametric Marketing), and I will be speaking about whether there’s going to be a singularity, when it might happen, if ever, and whether that’s even a relevant question to be asking.

Daniel and Chris are absolutely brilliant, and I can promise this will be a fun and informative discussion. If our previous discussions are any indication, we’ll all bring unique points of view to the debate, er, panel.

You’ll find us here:

Tuesday, March 13, 2012

9:30 AM - 10:30 AM

Hilton Austin Downtown

Salon J

By the way, if you’re there and want to get hold of me, Twitter is usually the best way. You’ll find me at @hertling. You can find Daniel at @danielwilsonpdx, and Chris at @paramktg.

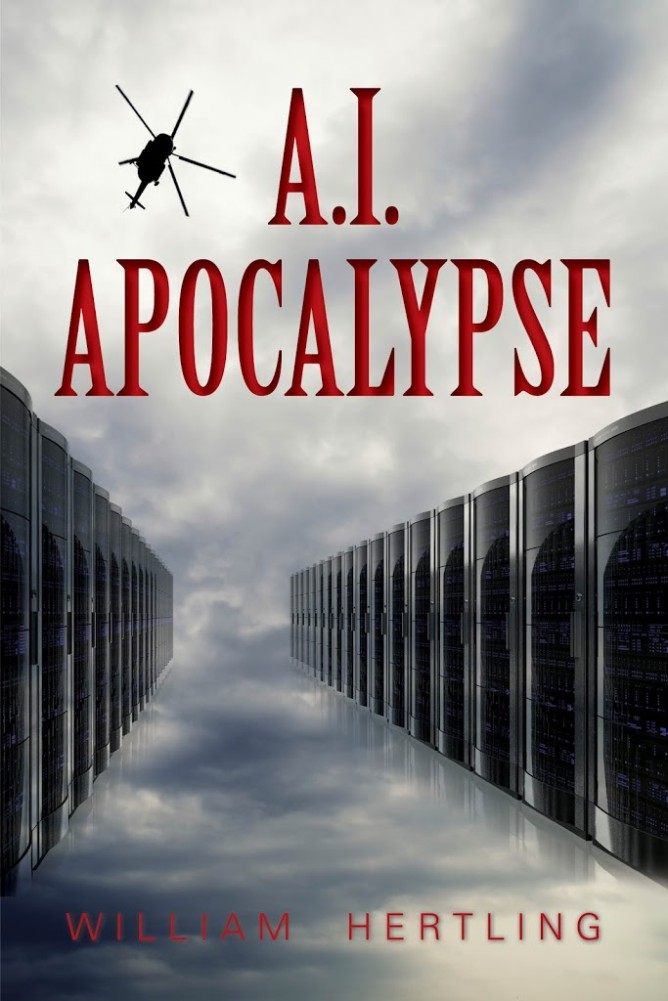

A.I. Apocalypse, the sequel to Avogadro Corp, is now available on Amazon!

A.I. Apocalypse: Sequel to Avogadro Corp

A little bit about A.I. Apocalypse:

Leon Tsarev is a high school student set on getting into a great college program, until his uncle, a member of the Russian mob, coerces him into developing a new computer virus for the mob’s botnet – the slave army of computers they use to commit digital crimes.

The evolutionary virus Leon creates, based on biological principles, is successful, a little too successful. All the world’s computers are infected. Everything from cars to payment systems and, of course, computers and smart phones stops functioning, and with them go essential functions including emergency services, transportation, and the food supply. Billions of people may die.

But evolution never stops. The virus continues to change, developing intelligence, communication, and finally an entire civilization of A.I. called the Phage. Some may be friendly to humans, but others most definitely are not.

Leon and his companions must race against time and the bungling military to find a way to either befriend or eliminate the Phage and restore the world’s computer infrastructure.

A.I. Apocalypse is the second book of the Singularity Series. It’s available now for the Kindle, and will be available in print and additional electronic versions in June. Buy it today!

Oh boy, imagine a bunch of these things chasing after you. Between the sound and the formation flying, they are pretty scary.

Recently people have been saying nice things about my writing.

But then I mentioned a book I’d just read called Avogadro Corp. While it’s obviously a play on words with Google, it’s a tremendous book that a number of friends had recommended to me. In the vein of Daniel Suarez’s great books Daemon and Freedom (TM), it is science fiction that has a five year aperture – describing issues, in solid technical detail, that we are dealing with today that will impact us by 2015, if not sooner.

There are very few people who appreciate how quickly this is accelerating. The combination of software, the Internet, and the machines is completely transforming society and the human experience as we know it. As I stood overlooking Park City from the patio of a magnificent hotel, I thought that we really don’t have any idea what things are going to be like in twenty years. And that excites me to no end while simultaneously blowing my mind.

You can read his full blog post. (Thank you, Brad.)

While I loved the endorsement, what really got me excited is that Brad appreciated the book for exactly the reasons I hoped. Yes, it’s a fun technothriller, but really it’s a tale of how the advent of strong, self-driven, independent artificial intelligence is both very near and will have a very significant impact. Everything from the corporate setting to the technology used should reinforce the fact that this could be happening today.

I first got interested in predicting the path of technology in 1998. That was the year I made a spreadsheet with every computer I had owned over the course of twelve years, tracking the processor speed, the hard drive capacity, the memory size, and the Internet connection speed.

The spreadsheet was darn good at predicting when music sharing would take off (1999, Napster) and video streaming (2005, YouTube). It also tells when the last magnetic platter hard drive will be manufactured (2016), and it predicts when we should expect strong artificial intelligence to emerge.

There are lots of different ways to talk about artificial intelligence, so let me briefly summarize what I’m concerned about: general-purpose, self-motivated, independently acting intelligence, roughly equal in cognitive capacity to human intelligence.

Lots of other kinds of artificial intelligence are interesting, but they aren’t exactly issues to be worried about. Canine-level artificial intelligence might make for great robot helpers for people, similar to guide dogs, but just as we haven’t seen a canine uprising, we’re also not likely to see an A.I. uprising from beings of that level of intelligence.

So how do we predict when we’ll see human-grade A.I.? There’s a range of estimates for how computationally difficult it is to simulate the human brain. One estimate takes the portion of our brain that we use for image analysis and compares it to the amount of computational power it takes to replicate that analysis in software. Here are the estimates I like to deal with:

| Estimate of Complexity | Processing Power Needed | How Determined |
|---|---|---|
| Easy: Ray Kurzweil’s estimate #1 from Singularity Is Near | 10^14 instructions/second | Extrapolated from the weight of the portion of the brain responsible for image processing, compared with the computation needed to recreate that processing in software. |
| Medium: Ray Kurzweil’s estimate #2 from Singularity Is Near | 10^15 instructions/second | Based on the human brain containing 10^11 neurons, and it taking 10^4 instructions per neuron. |
| Hard: My worst case scenario: brute force simulation of every neuron | 10^18 instructions/second | Brute force simulation of 10^11 neurons, each having 10^4 synapses, firing up to 10^3 times per second. |

(Just for the sake of completeness, there is yet another estimate that includes glial cells, which may affect cognition, and of which we have ten times as many as neurons. We can guess that this might be about 10^19 instructions/second.)

The growth in computer processing power has been following a very steady curve for a very long time. Since the mid-1980s, when I started following technology news, scientists have been saying things along the lines of “We’re approaching the fundamental limits of computation. We can’t possibly go any faster or smaller.” Then we find some way around the limitation, whether it’s new materials science, new manufacturing techniques, or parallelism.
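For anyone who wants to check the arithmetic, here is a minimal Python sketch of the Medium, Hard, and glial-cell figures. The variable names are mine, and the glial figure assumes the cost simply scales with the tenfold cell count, which is only a guess.

```python
# Order-of-magnitude arithmetic behind the estimates above.
# All constants come from the table; the names are illustrative.
NEURONS = 10**11
INSTRUCTIONS_PER_NEURON = 10**4   # Medium estimate
SYNAPSES_PER_NEURON = 10**4       # Hard estimate
MAX_FIRINGS_PER_SECOND = 10**3    # Hard estimate
GLIA_PER_NEURON = 10              # "ten times as many as neurons"

# Medium: neurons times instructions per neuron.
medium_ips = NEURONS * INSTRUCTIONS_PER_NEURON

# Hard: every synapse of every neuron, at the maximum firing rate.
hard_ips = NEURONS * SYNAPSES_PER_NEURON * MAX_FIRINGS_PER_SECOND

# Glial guess. ASSUMPTION: cost grows linearly with the number of cells.
glial_ips = hard_ips * GLIA_PER_NEURON

for label, ips in [("Medium", medium_ips), ("Hard", hard_ips), ("With glia", glial_ips)]:
    print(f"{label}: {ips:.0e} instructions/second")
```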

So if we take the growth in computing power (47% increase in MIPS per year), and plot that out over time, we get this very nice 3×3 matrix in which we can look at the three estimates of complexity and three ranges for the number of available computers to work with:

| Number of Computers | Easy Simulation (10^14 ips) | Medium Simulation* (10^16 ips) | Difficult Simulation (10^18 ips) |
|---|---|---|---|
| 10,000 | now | 2016 | 2028 |
| 100 | 2016 | 2028 | 2040 |
| 1 | 2028 | 2040 | 2052 |
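The twelve-year steps in the table follow from the 47% annual growth figure: a 100-fold increase in processing power takes roughly twelve years at that rate. Here is a rough Python sketch of that calculation. The function names and the choice of 2016 as the starting point are mine, and it assumes the growth rate stays constant.

```python
import math

ANNUAL_GROWTH = 0.47  # 47% increase in MIPS per year, from the text


def years_to_grow(factor, annual_growth=ANNUAL_GROWTH):
    """Years of compound growth needed to multiply processing power by `factor`."""
    return math.log(factor) / math.log(1 + annual_growth)


def projected_year(start_year, factor, annual_growth=ANNUAL_GROWTH):
    """Calendar year (rounded) when power has grown by `factor` from `start_year`."""
    return round(start_year + years_to_grow(factor, annual_growth))


# Each 100x step (100x harder simulation, or 100x fewer computers)
# pushes the date out by about the same number of years.
print(round(years_to_grow(100), 1))
for factor in (100, 10**4, 10**6):
    print(factor, projected_year(2016, factor))
```

Starting from the 2016 cell, each further factor of 100 lands on the next date along the table's diagonal.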

By the way: If you find this stuff interesting, researcher Chris Robson, author Daniel H. Wilson, and I will be discussing this very topic at SXSW Interactive on Tuesday, March 13th at 9:30 AM.

Very eerie video on how video recognition algorithms see the world. From Timo Arnall:

How do robots see the world? How do they gather meaning from our streets, cities, media and from us?

This is an experiment in found machine-vision footage, exploring the aesthetics of the robot eye.

Robot readable world from Timo on Vimeo.

The Singularity Institute has posted a comprehensive list of all Singularity Summit talks with videos of each talk. A few that I’m particularly interested in: